JSON Web Token (JWT) is a standard (RFC 7519) for exchanging cryptographically signed JSON data. It is probably the most popular current standard for authorization on the web, especially in microservices and distributed architectures.

As a developer, when you are asked to implement a modern web application, you may need to break it down into independent services. Independent services and distributed architecture have many advantages. One thing that you will need to think about is how your services will know that users are allowed to use them.

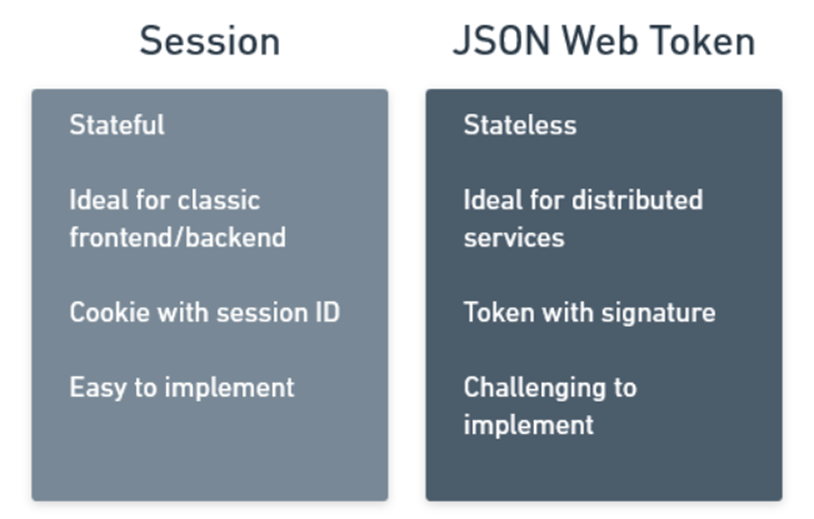

With stateful session management, your solution would be to create a user session that is shared among all parts of the system. But with a growing distributed system, sharing a session can be quite challenging.

The alternative to stateful session management is passing a stateless JSON Web Token which will represent an access token or an identity token. It will hold claims that allow your services to authorize their users and it will use the magic of cryptography to ensure that the token is authentic and has not been tampered with.

This way, your services don’t need to share a stateful session; they only need to trust the token they are given.

Standard Sessions

If you have been around for a while like me, you know that the standard approach on the web has been sessions and session cookies.

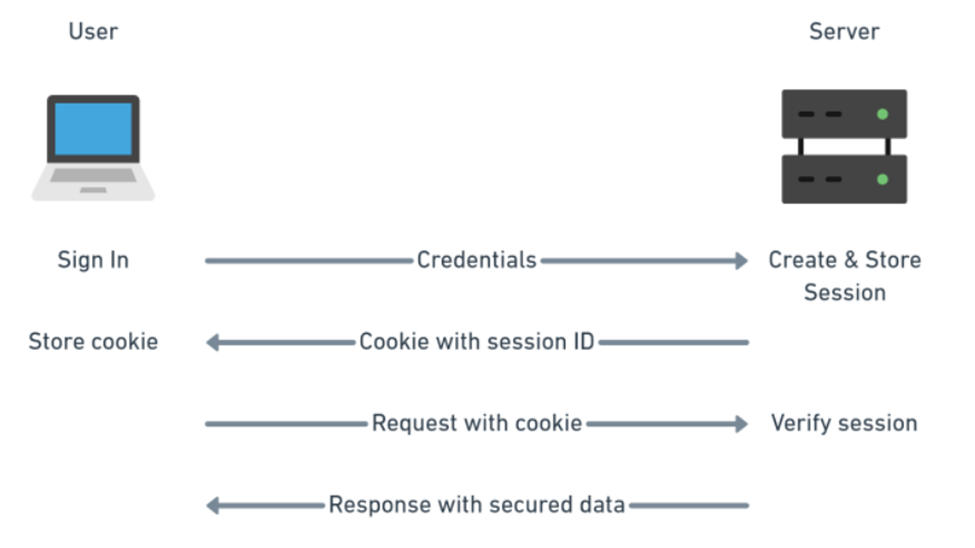

Users would sign in with their credentials and the server would give them back a cookie with their session ID. That cookie is then sent by the user with every request to authorize the user.

Nowadays that process is so automated that you barely need to write any code to support it, and browsers automatically send the session cookie with every request. It is super convenient.

The above diagram should feel fairly familiar and simple and it is what websites have been doing for a long time.

For a simple website, it is far easier to implement standard session management which is well supported by libraries on the server, and cookie management in the browser.

What is JWT?

A JWT is simply a signed JSON object intended to be shared between two parties. The signature is used to verify the authenticity of the token and to make sure that none of the JSON data has been tampered with. The token data itself is not encrypted, so anyone who holds the token can read it.
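To make the "signed, not encrypted" point concrete, here is a minimal sketch in Python using only the standard library. It splits a token into its three dot-separated parts and reads the payload without any key. The function names, the example claims, and the placeholder signature are illustrative assumptions, not part of any library.

```python
import base64
import json


def b64url_encode_json(obj: dict) -> str:
    """Serialize a dict to JSON and encode it as unpadded base64url."""
    raw = json.dumps(obj, separators=(",", ":")).encode("utf-8")
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    """Decode base64url text, restoring the padding that JWTs strip."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def read_claims_unverified(token: str) -> dict:
    """Return the payload of a JWT WITHOUT checking its signature.

    Useful only to show that the data is readable by anyone.
    Never authorize a request based on unverified claims.
    """
    _header, payload, _signature = token.split(".")
    return json.loads(b64url_decode(payload))


# Hypothetical token: header and payload are real base64url segments,
# the signature is a placeholder because no key is needed to read the data.
example_token = ".".join([
    b64url_encode_json({"alg": "HS256", "typ": "JWT"}),
    b64url_encode_json({"sub": "user-123", "role": "admin"}),
    "placeholder-signature",
])

print(read_claims_unverified(example_token))
```

Because the payload is only encoded, it should never carry secrets such as passwords.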

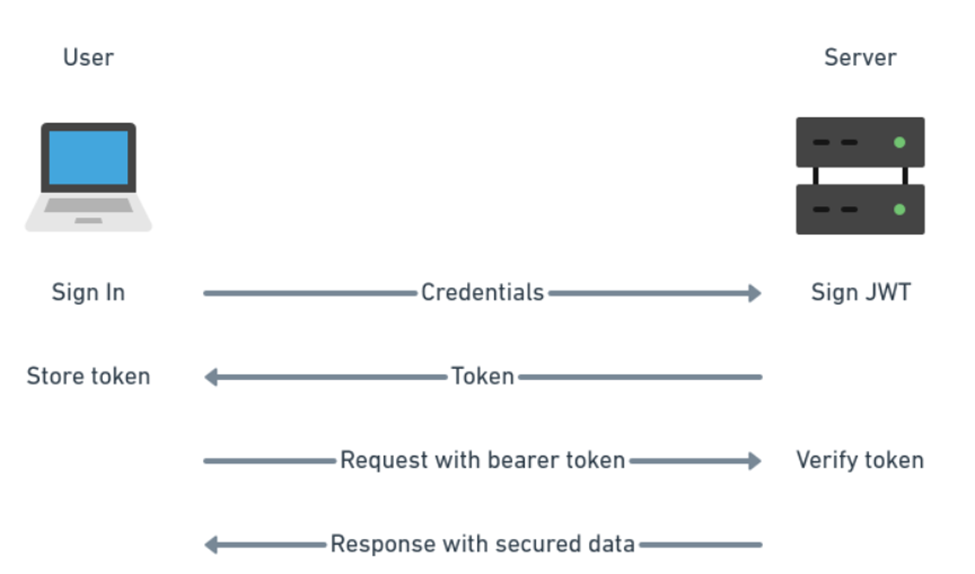

The method of authenticating users does not change with JWT. You can still use a user name and password (although you should use something more secure like two-factor authentication or DID Auth). The difference is only in how you manage the user authorization (how you let your service know that the user has permission to do something).

On the server, you verify the token signature and get access to the JSON data directly which is much simpler for distributed architectures.
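As a rough illustration of that server-side check, the sketch below signs and verifies a token with HMAC-SHA256 (the HS256 algorithm) using only the Python standard library. It assumes a single shared secret and an optional exp claim. The names sign_hs256 and verify_hs256 are hypothetical, and a production service would normally rely on a maintained JWT library instead.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(segment: str) -> bytes:
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_hs256(claims: dict, secret: bytes) -> str:
    """Build header.payload.signature, signing the first two parts."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url_encode(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url_encode(signature)}"


def verify_hs256(token: str, secret: bytes) -> dict:
    """Return the claims if the signature is valid, else raise ValueError."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        signature = _b64url_decode(signature_b64)
    except (ValueError, TypeError) as exc:
        raise ValueError("malformed token") from exc

    # Accept only the algorithm this service expects.
    if not isinstance(header, dict) or header.get("alg") != "HS256":
        raise ValueError("unexpected algorithm")

    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    if not hmac.compare_digest(signature, expected):
        raise ValueError("bad signature")

    claims = json.loads(_b64url_decode(payload_b64))
    # Reject tokens whose expiry time (seconds since the epoch) has passed.
    if "exp" in claims and claims["exp"] < time.time():
        raise ValueError("token expired")
    return claims
```

Any service that holds the secret can run verify_hs256 on its own, which is what removes the need for a shared session store.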

In your web application frontend, your code needs to manage how the token is stored in the browser (cookie, session storage, or local storage) and how it is passed with requests to the server (as an Authorization header with the Bearer scheme).
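The bearer header itself is just the word Bearer, a space, and the token. The following sketch shows both sides in Python: building the header for an outgoing request and extracting the token on the server. Both helper names are hypothetical and not tied to any framework.

```python
from typing import Optional


def bearer_header(token: str) -> dict:
    """Headers a client would attach to each request."""
    return {"Authorization": f"Bearer {token}"}


def extract_bearer_token(authorization_header: Optional[str]) -> Optional[str]:
    """Return the token from an Authorization header, or None if absent or invalid."""
    if not authorization_header:
        return None
    scheme, _, credentials = authorization_header.partition(" ")
    # The scheme name is case-insensitive; the token itself is not.
    if scheme.lower() != "bearer" or not credentials.strip():
        return None
    return credentials.strip()
```

The server would then pass the extracted token to its signature verification step before trusting any claim in it.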

If you compare the diagrams for session-based authorization and JWT, you will notice that the principle is very similar. The main reason for using JWT is for the client-server communication to remain stateless.

JWT gained popularity because statelessness made it easier to design independent services without having to deal with shared session management.

Where to store the JWT token in the browser?

You really have three options here: cookies, web storage, or memory. The most commonly used option seems to be local storage.

JWT in a cookie

Cookies have the advantage that they are automatically sent together with each request so you don’t need to deal with the authorization header.

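Cookies are still open to Cross-Site Request Forgery (CSRF) attacks, so you should also implement CSRF tokens. A CSRF token is an unpredictable random string that the server issues and the client sends back with each state-changing request, typically in a hidden form field or a custom header (in the double-submit pattern it is also set as a cookie). The server rejects any request where the token is missing or does not match.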
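A minimal sketch of the two server-side pieces of a CSRF token, generation and comparison, could look like this in Python. Where the expected value is kept (server session or a second cookie) is left out, and the function names are illustrative assumptions.

```python
import hmac
import secrets
from typing import Optional


def new_csrf_token() -> str:
    """Generate an unpredictable, URL-safe token from 32 random bytes."""
    return secrets.token_urlsafe(32)


def csrf_token_matches(expected: str, submitted: Optional[str]) -> bool:
    """Compare the token the server issued with the one the request sent."""
    if not submitted:
        return False
    # Constant-time comparison, so timing does not reveal partial matches.
    return hmac.compare_digest(expected.encode("utf-8"), submitted.encode("utf-8"))
```

A request handler would call csrf_token_matches before processing any state-changing request and reject the request when it returns False.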

You should also set the HttpOnly attribute so that the cookie is available only server-side. A cookie with the HttpOnly attribute is inaccessible to the JavaScript document.cookie API; it is sent only to the server.

A cookie with the Secure attribute is sent to the server only with an encrypted request over the HTTPS protocol (however, on localhost only, you can still use HTTP).
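For illustration, this is roughly how a server could build a Set-Cookie value with both attributes using Python's standard http.cookies module. The cookie name access_token and the helper name are assumptions made for the example.

```python
from http.cookies import SimpleCookie


def jwt_set_cookie_value(token: str, name: str = "access_token") -> str:
    """Return the value for a Set-Cookie header carrying the token."""
    cookie = SimpleCookie()
    cookie[name] = token
    cookie[name]["httponly"] = True  # hidden from document.cookie
    cookie[name]["secure"] = True    # sent only over HTTPS
    cookie[name]["path"] = "/"
    return cookie[name].OutputString()


print(jwt_set_cookie_value("abc.def.ghi"))
```

The response would carry this string in its Set-Cookie header, after which the browser attaches the cookie to later requests on its own.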

JWT in web storage: Local storage vs session storage

The difference between the two is how long the data lives. Session storage is cleared when the user closes the tab or window. Local storage persists until it is explicitly deleted.

Unlike cookies, local storage is sandboxed to a specific origin (scheme, host, and port), and its data cannot be accessed from any other origin, including sub-domains. But remember that you are still vulnerable to Cross-Site Scripting (XSS). Both cookie and web storage solutions are vulnerable to XSS.

Local storage is used the most in JWT implementations. However, session storage is the more secure option here.

With localStorage, the JWT is not passed with each request automatically; you need to send it to the server in an authorization header yourself.

JWT in memory

The most secure solution here is to store the JWT in the memory of your single-page application. This means that you end up storing the token in a JavaScript variable without any additional persistence.

This comes with some limitations. You cannot implement single sign-on (SSO), and each tab or open window in a browser will require its own sign-in because JavaScript memory is not shared. However, the sharing issue can be worked around with a refresh token.

This solution is of course still vulnerable to Cross-Site Scripting like all the other solutions.

If you are building a standard frontend/backend website, use standard session management. If you are building a distributed system with services, implement JWT authorization.

References